Artificial intelligence is rapidly moving from experimentation to enterprise deployment across regulated industries. Financial institutions rely on AI for underwriting and fraud detection. Healthcare organizations use predictive models to support diagnostics and improve operational efficiency. Insurance providers deploy automation to streamline claims processing and risk assessment.

Yet in these environments, AI cannot be adopted casually. It must operate within established compliance frameworks, withstand supervisory scrutiny, and fit existing legal accountability structures. Unlike in less regulated sectors, innovation here must hold up under audit, regulatory inquiry, and legal challenge.

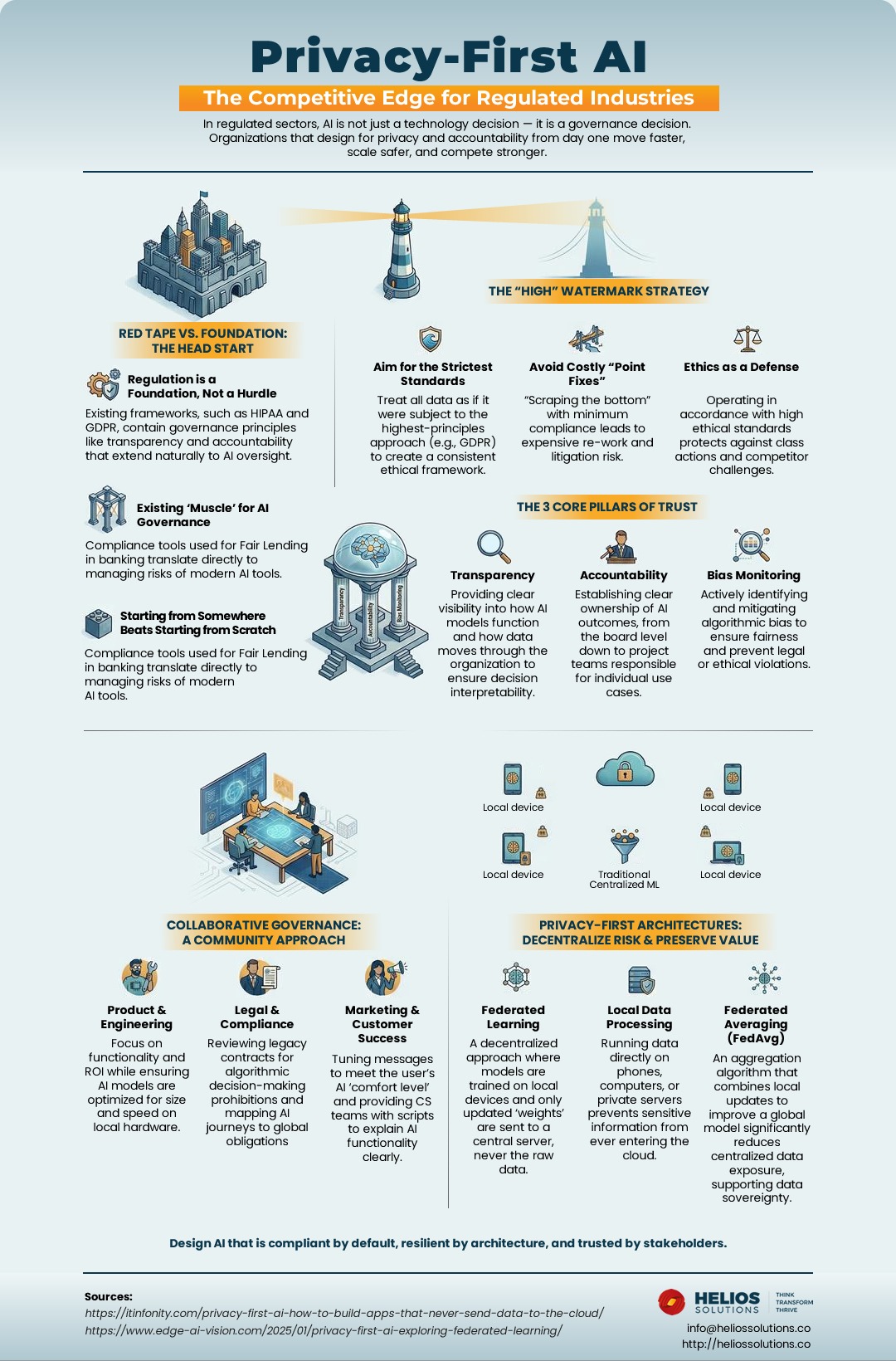

This is where privacy-first AI becomes more than a compliance consideration. It becomes a competitive differentiator.

Organizations that design AI systems with governance, accountability, and architectural resilience from the outset move faster and scale more sustainably. In regulated industries, trust is not optional — it is infrastructure.

Why Privacy-First AI Matters in Regulated Industries

In regulated enterprises, every new technology must align with existing oversight mechanisms. Data protection regulations, sector-specific compliance mandates, and internal risk management frameworks already define how systems are governed. AI introduced into this environment must integrate seamlessly into that structure.

When privacy is treated as an afterthought, organizations often face delayed deployments, costly redesigns, and greater legal exposure. Opaque models draw questions from auditors and supervisors. Unclear data flows create audit friction. An inability to explain decisions erodes stakeholder confidence.

By contrast, a privacy-first approach builds systems that are compliant by default. Governance is embedded in the architecture rather than layered on once regulatory pressure emerges. This shift reduces uncertainty and makes long-term scaling easier.

The High-Watermark Strategy for AI Compliance

One effective approach for regulated enterprises is a high-watermark strategy. Instead of tailoring AI controls to each jurisdiction or to regulatory minimums, organizations design systems to meet the most stringent applicable standard across all operations.

Existing frameworks, such as HIPAA and GDPR, contain governance principles like transparency and accountability that extend naturally to AI oversight. These principles provide a strong foundation for model documentation, data protection, and supervisory review.

Designing to the highest standard creates consistency across the enterprise. It prevents fragmented compliance architectures and reduces operational complexity. More importantly, it avoids reactive “point fixes” that often occur when regulations evolve faster than internal controls.

Minimal compliance may appear efficient in the short term, but it frequently results in expensive rework, retraining, and reputational exposure. A high-watermark strategy embeds discipline early, reducing risk over time.

The Three Pillars of AI Governance

Privacy-first AI operates on three foundational pillars that transform AI from an experimental capability into a governable enterprise asset.

1. Accountability

Accountability ensures that AI systems have clear ownership. Governance must extend from executive oversight to named use-case owners responsible for performance monitoring and risk mitigation. Without defined responsibility, oversight becomes fragmented and ineffective.

2. Bias Monitoring

Bias monitoring addresses one of the most significant risks in regulated AI deployment. Biases embedded in historical data can carry over into model outcomes unintentionally, creating regulatory and ethical exposure. Continuous evaluation, validation, and remediation processes are essential to ensure fairness and defensibility.
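
One simple way to make continuous evaluation concrete is to track the gap in selection rates between groups. A selection rate is the share of favorable outcomes, such as approvals, and the gap is sometimes called the demographic parity difference. The sketch below is illustrative only. This metric is one of several fairness measures. The function names are hypothetical. The 0.10 tolerance is an assumed internal policy value, not a regulatory threshold.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence

# Hypothetical tolerance chosen by an internal risk committee (assumption).
MAX_GAP = 0.10


def selection_rates(decisions: Sequence[int], groups: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Share of favorable decisions (1 = approve) within each group."""
    totals: Dict[Hashable, int] = defaultdict(int)
    positives: Dict[Hashable, int] = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += int(decision)
    return {g: positives[g] / totals[g] for g in totals}


def parity_gap(decisions: Sequence[int], groups: Sequence[Hashable]) -> float:
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


def check_batch(decisions: Sequence[int], groups: Sequence[Hashable], max_gap: float = MAX_GAP) -> dict:
    """Compute the gap for one batch of decisions and flag it if it exceeds tolerance."""
    gap = parity_gap(decisions, groups)
    return {"gap": gap, "flag_for_review": gap > max_gap}


if __name__ == "__main__":
    decisions = [1, 1, 0, 1, 0, 0, 1, 0]
    groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
    print(check_batch(decisions, groups))  # {'gap': 0.5, 'flag_for_review': True}
```

How a flag is handled, for example by escalating it to the accountable use-case owner, is a governance choice that sits outside this code.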

3. Transparency

Transparency converts AI from a “black box” into an auditable system. Enterprises must understand how models are trained, what data sources are used, and how outputs are generated. When decisions can be explained and traced, regulatory scrutiny becomes manageable rather than disruptive.

Together, these pillars create a structure where innovation and oversight coexist.

Regulation as a Strategic Foundation

A common misconception is that AI governance requires building entirely new oversight systems. In reality, regulated enterprises already possess mature compliance infrastructures. Model risk management frameworks, internal audit processes, documentation standards, and supervisory reporting mechanisms are well established in many sectors.

Rather than starting from zero, organizations can extend these existing governance foundations to AI initiatives. Risk committees can incorporate AI review processes. Documentation practices can expand to include model lifecycle management. Audit teams can adapt current controls to new algorithmic systems.

Recognizing this head start turns regulation from perceived friction into a structural advantage. Organizations that leverage existing governance maturity deploy AI with greater confidence and fewer surprises.

Collaborative AI Governance

Effective AI governance is inherently cross-functional. It cannot reside solely within IT or data science teams. Product leaders, legal advisors, compliance officers, and customer-facing teams all influence how AI systems are designed, deployed, and perceived.

Engineering teams must embed privacy-by-design and security-by-design principles directly into system architecture. Legal and compliance teams ensure that AI use cases align with jurisdictional requirements and contractual obligations. Customer-facing teams communicate clearly about how AI systems operate and where human oversight remains.

When governance is collaborative, organizations reduce internal friction and present a consistent posture to regulators and customers alike. Trust is built not only through technical safeguards but through coordinated enterprise discipline.

Privacy-First AI Architecture

Governance principles must be reinforced by architectural decisions. Technical design choices significantly influence the privacy and resilience of AI systems.

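Federated learning, for example, enables models to train across distributed data sources without centralizing raw data. Each participant trains locally and shares only model updates. An aggregation algorithm then combines those updates into an improved global model. Because raw records never leave their source, centralized data exposure drops significantly, which supports data sovereignty objectives. Secure aggregation techniques go further by letting the server see only the combined update rather than any single participant's contribution. With them, this approach lowers privacy risk while preserving the benefits of collective learning.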
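
To make the aggregation step concrete, here is a minimal sketch of federated averaging (FedAvg), one widely used way to combine local updates. The text above does not name a specific algorithm. The sketch assumes a toy linear model trained with plain gradient descent. The function names (`local_update`, `federated_average`, `training_round`) are hypothetical. Secure aggregation, encryption, and transport are deliberately left out.

```python
from typing import List, Sequence, Tuple

Vector = List[float]
Dataset = Sequence[Tuple[Vector, float]]  # (features, target) rows held by one client


def local_update(weights: Vector, data: Dataset, lr: float = 0.1, epochs: int = 5) -> Vector:
    """Train a linear model on one client's private data with gradient descent on MSE.
    Only the returned weights leave the client; `data` never does."""
    w = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for i, xi in enumerate(x):
                grad[i] += 2 * err * xi / len(data)
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w


def federated_average(updates: Sequence[Tuple[Vector, int]]) -> Vector:
    """FedAvg: average client weights, weighting each by its number of local examples."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


def training_round(global_w: Vector, clients: Sequence[Dataset]) -> Vector:
    """One round: every client trains locally, then the server aggregates."""
    updates = [(local_update(global_w, data), len(data)) for data in clients]
    return federated_average(updates)


if __name__ == "__main__":
    # Two hypothetical clients whose private rows follow y = 2 * x.
    client_a = [([1.0], 2.0), ([2.0], 4.0)]
    client_b = [([3.0], 6.0)]
    w = [0.0]
    for _ in range(20):
        w = training_round(w, [client_a, client_b])
    print(w)  # close to [2.0]
```

Weighting by example count keeps larger data holders from being drowned out by smaller ones. Throughout, the server handles only weight vectors and never the rows in `client_a` or `client_b`.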

Local data processing further strengthens privacy posture by keeping sensitive information within controlled environments. Processing data directly on devices or on private infrastructure avoids unnecessary cloud exposure and narrows the attack surface.

For highly sensitive deployments, differential privacy adds a further safeguard. It injects noise into model updates, calibrated to how much any single record can affect the result. This limits what can be inferred about any individual from the shared information, makes reconstructing individual-level data far harder, and enhances regulatory defensibility.
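
A minimal sketch of how calibrated noise can be applied to federated updates, in the style of DP-FedAvg with the Gaussian mechanism. Each client update is first clipped to a maximum L2 norm, which bounds any one participant's influence on the sum. Gaussian noise scaled to that bound is then added before averaging. The parameter values and function names are illustrative assumptions, and the participant count per round is treated as public. Converting `noise_multiplier` into a formal (ε, δ) guarantee requires a privacy accountant, which is not shown.

```python
import math
import random
from typing import List, Optional, Sequence

Vector = List[float]


def clip_update(update: Vector, clip_norm: float) -> Vector:
    """Scale an update down so its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(u * u for u in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [u * scale for u in update]


def dp_aggregate(updates: Sequence[Vector], clip_norm: float,
                 noise_multiplier: float,
                 rng: Optional[random.Random] = None) -> Vector:
    """Clip each update, add Gaussian noise to the sum, then average.

    Clipping caps one participant's contribution to the sum at clip_norm,
    so noise with std = noise_multiplier * clip_norm is calibrated to it.
    """
    rng = rng or random.Random()
    clipped = [clip_update(u, clip_norm) for u in updates]
    sigma = noise_multiplier * clip_norm
    dim = len(clipped[0])
    noisy_sum = [sum(c[i] for c in clipped) + rng.gauss(0.0, sigma) for i in range(dim)]
    # Dividing by the participant count assumes that count is public.
    return [s / len(updates) for s in noisy_sum]


if __name__ == "__main__":
    # Hypothetical client updates; the large first one gets clipped to norm 1.0.
    updates = [[3.0, 4.0], [0.0, 0.5], [0.2, -0.1]]
    print(dp_aggregate(updates, clip_norm=1.0, noise_multiplier=1.1, rng=random.Random(42)))
```

Larger `noise_multiplier` values give stronger privacy at the cost of noisier, slower-converging models. That makes the setting a risk decision as much as an engineering one.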

These architectural decisions demonstrate that privacy is not merely policy-driven; it is engineered.

At-a-Glance Framework

The infographic below distills the key principles and architectural considerations discussed above into a clear visual framework.

It provides a structured view of governance pillars, cross-functional oversight, and the technical foundations that enable privacy-first AI in regulated enterprises.

Turning Compliance into Competitive Advantage

Privacy-first AI does not restrict innovation. It enables sustainable innovation.

In regulated industries, the ability to demonstrate accountability, fairness, and transparency accelerates regulatory approvals and reduces audit friction. It strengthens customer trust and protects brand reputation. It allows organizations to scale AI initiatives without recurring governance crises.

Enterprises that embed privacy and oversight into system design behave differently under scrutiny. They do not scramble to justify their models. They are prepared.

Over time, this preparedness becomes a structural advantage. While competitors struggle with reactive compliance fixes, privacy-first organizations focus on strategic growth.

Design AI that is compliant by default, architected for resilience, and trusted by stakeholders.

At Helios, we work with regulated enterprises to embed governance, privacy, and accountability directly into AI systems — ensuring innovation scales with confidence.